AI code review is becoming part of the modern developer workflow.

Tools like GitHub Copilot can now help review pull requests, suggest changes, and assist with code review workflows directly inside GitHub. At the same time, developer adoption of AI tools continues to grow, but trust is not growing at the same speed. According to the Stack Overflow 2025 Developer Survey, 84% of respondents use or plan to use AI tools, while positive sentiment toward AI tools dropped to around 60%.

That creates a very important problem for Java developers:

AI can review code, but can it catch the bugs that actually break production?

Sometimes, yes.

But only if you use it correctly.

A basic AI review can help you find obvious issues: duplicated code, naming problems, missing null checks, simple refactoring opportunities, or inconsistent formatting.

But in real Java backend systems, the most dangerous bugs are rarely that simple.

They usually hide in:

- business logic

- edge cases

- transactions

- data consistency

- authorization

- performance

- missing tests

- unclear API contracts

- production observability

This is where senior engineering judgment still matters.

AI should not replace your pull request review process. It should make it stronger.

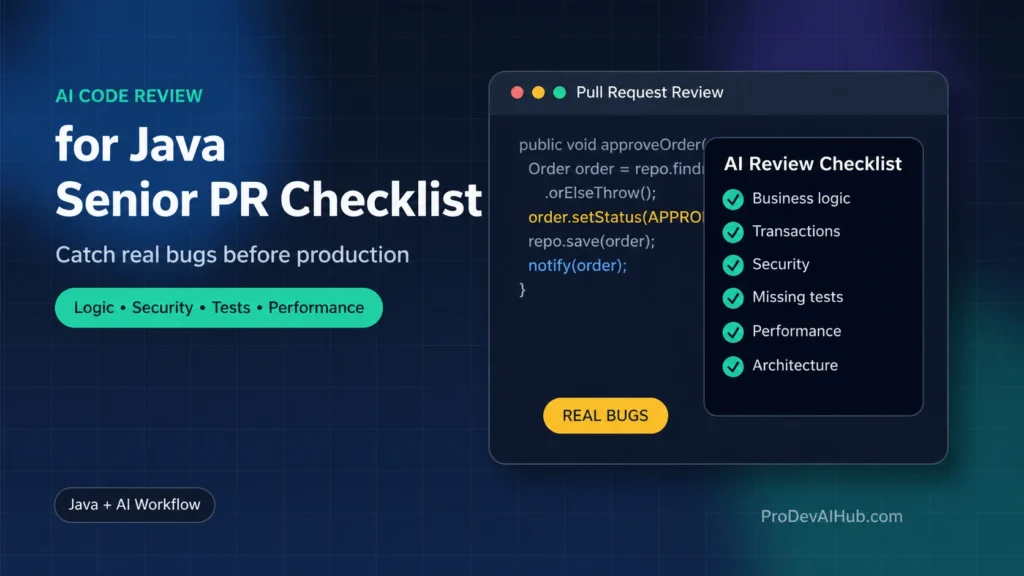

In this article, you’ll learn a practical senior-level checklist for reviewing Java pull requests with AI, so you can catch real bugs before they reach production.

Want the ready-to-use version? Download the Java AI PR Review Pack (2026):

https://prodevaihub.com/java-ai-pr-review-pack/

Why AI Code Review Is Useful, but Not Enough

AI code review is useful because it gives you a fast second pair of eyes.

It can summarize a pull request, explain a diff, suggest improvements, and highlight common issues. GitHub also documents Copilot code review workflows for requesting reviews and configuring code review assistance in pull requests.

But AI has one big weakness:

It does not always understand the real production context.

A Java method can look clean and still be dangerous.

A Spring Boot service can pass unit tests and still break a business rule.

A pull request can have good naming, good formatting, and good structure — but still introduce a bug that corrupts data, leaks sensitive information, or creates inconsistent behavior.

That is why the question should not be:

“Can AI review this code?”

The better question is:

“Can AI help me think like a senior reviewer?”

The answer is yes — if you guide it with the right checklist.

The Senior Java PR Review Mindset

A junior review often focuses on syntax, style, and whether the code “looks good.”

A senior review focuses on risk.

Before reviewing a Java pull request, ask:

- What can break in production?

- What data can become inconsistent?

- What user scenario was not tested?

- What assumption is hidden in this code?

- What happens if an external system fails?

- What happens if this method is called twice?

- What happens if the input is null, empty, duplicated, expired, unauthorized, or unexpected?

This is where AI becomes powerful.

Not because it magically knows the answer.

But because it can help you systematically inspect the pull request from multiple angles.

A good AI-assisted review should not only say:

“This code looks good.”

It should help you answer:

“What are the highest-risk issues in this Java PR?”

That is the difference between a shallow AI review and a senior AI-assisted review.

The 8-Part AI Code Review Checklist for Java

Use this checklist when reviewing Java or Spring Boot pull requests with AI.

You can paste the PR diff, relevant files, or code snippets into your AI tool and ask it to review the code through each of these lenses.

1. Business Logic and Edge Cases

The first thing to check is not syntax.

It is business behavior.

Many production bugs happen because the code works for the happy path but fails for real-world scenarios.

Ask:

- Does the code respect the business rule?

- What happens when the input is null?

- What happens when the input is empty?

- What happens when the value is negative?

- What happens when the entity is already processed?

- What happens when the same action is triggered twice?

- What happens when the status is invalid?

- What happens when the user does not have permission?

- What happens when the database contains unexpected data?

Example AI prompt:

Review this Java code for business logic bugs and edge cases. Focus on:

- incorrect assumptions

- missing validation

- invalid state transitions

- duplicate actions

- unexpected input scenarios

- cases where the happy path works but production can fail

Return only high-impact issues.

This is especially important in Java backend systems where business rules are often spread across services, controllers, validators, repositories, and external integrations.

A method can look simple but still allow an invalid workflow.

For example:

- approving an order twice

- refunding an already refunded payment

- activating a disabled user

- updating a record without checking ownership

- applying a discount more than once

- skipping a required validation step

These are the types of issues a senior reviewer should catch.
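One way to make invalid workflows like these hard to write is to keep the allowed state transitions in a single table, so a reviewer (human or AI) checks one place instead of scattered `if` statements. The sketch below assumes a hypothetical `OrderStatus` enum with four states; the state names and allowed transitions are illustrative, not taken from any real system.

```java
import java.util.EnumSet;
import java.util.Map;
import java.util.Set;

// Hypothetical order lifecycle, used only to illustrate a transition guard.
enum OrderStatus { PENDING, APPROVED, CANCELLED, SHIPPED }

final class OrderTransitions {
    // Single source of truth: which target states each state may move to.
    private static final Map<OrderStatus, Set<OrderStatus>> ALLOWED = Map.of(
            OrderStatus.PENDING, EnumSet.of(OrderStatus.APPROVED, OrderStatus.CANCELLED),
            OrderStatus.APPROVED, EnumSet.of(OrderStatus.SHIPPED, OrderStatus.CANCELLED),
            OrderStatus.CANCELLED, EnumSet.noneOf(OrderStatus.class),
            OrderStatus.SHIPPED, EnumSet.noneOf(OrderStatus.class));

    private OrderTransitions() {
    }

    static boolean canTransition(OrderStatus from, OrderStatus to) {
        return ALLOWED.getOrDefault(from, Set.of()).contains(to);
    }

    // Rejects invalid steps (for example APPROVED -> APPROVED, i.e. approving twice)
    // instead of silently accepting them.
    static OrderStatus transition(OrderStatus from, OrderStatus to) {
        if (!canTransition(from, to)) {
            throw new IllegalStateException("Invalid order transition " + from + " -> " + to);
        }
        return to;
    }
}
```

A table like this also gives the AI prompt above something concrete to check: every new status change in the PR should go through `transition(...)`.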

2. API Contracts and Backward Compatibility

In backend development, a small change can break another system.

This is especially true when your Java application exposes REST APIs, consumes external APIs, or shares DTOs with frontend applications.

Ask:

- Did the PR rename a JSON field?

- Did it remove a field that clients still use?

- Did it change the meaning of an existing field?

- Did it change an HTTP status code?

- Did it change the request or response format?

- Did it make an optional field required?

- Did it introduce a new validation rule that can break existing clients?

- Did it change the error response structure?

Example AI prompt:

Review this Java Spring Boot PR for API contract and backward compatibility risks. Check whether the changes can break existing clients, frontend applications, integrations, or automated consumers. Focus on:

- DTO changes

- JSON field changes

- HTTP status codes

- validation changes

- request and response compatibility

- error response structure

This matters because many API-breaking changes are not obvious in the pull request.

A developer may think they only renamed a field for clarity.

But if that field is part of a public API contract, the change can break production consumers.

For Java and Spring Boot applications, pay close attention to:

- controller method signatures

- request DTOs

- response DTOs

- validation annotations

- enum values

- serialized field names

- exception handlers

- API documentation

A senior reviewer does not only review the code.

They review the contract.
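For enum values and serialized names, a common way to avoid breaking existing clients is to keep accepting the old wire value for a transition period. The plain-Java sketch below assumes a hypothetical `DeliveryMode` enum whose value `HOME` was renamed to `HOME_DELIVERY`. In a Spring Boot app that uses Jackson you would more likely use `@JsonCreator` on a factory method like this one (or `@JsonAlias` for a renamed property), but the compatibility idea is the same.

```java
import java.util.Map;

// Hypothetical enum: the wire value "HOME" was renamed to "HOME_DELIVERY".
enum DeliveryMode {
    HOME_DELIVERY,
    PICKUP;

    // Old wire values that existing clients may still send.
    private static final Map<String, DeliveryMode> LEGACY_ALIASES = Map.of("HOME", HOME_DELIVERY);

    // Accepts both the current and the legacy names; unknown values still fail loudly.
    static DeliveryMode fromWire(String value) {
        if (value == null) {
            throw new IllegalArgumentException("deliveryMode is required");
        }
        DeliveryMode legacy = LEGACY_ALIASES.get(value);
        return legacy != null ? legacy : DeliveryMode.valueOf(value); // valueOf throws IllegalArgumentException
    }
}
```

Accepting input is only half of the contract: responses may also need to keep emitting the old value until every consumer has migrated, which is exactly the kind of question to raise in review.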

3. Transactions and Data Consistency

This is one of the most important areas in Java backend code review.

Many serious production bugs come from partial updates, missing transactions, incorrect rollback behavior, or inconsistent data after an exception.

Ask:

- Is `@Transactional` needed?

- Is `@Transactional` placed at the right level?

- Are multiple database writes part of the same business operation?

- What happens if the second write fails?

- What happens if an external API call fails after a database update?

- Are exceptions handled in a way that prevents rollback?

- Could the system save partial data?

- Could the same operation run concurrently and create inconsistent results?

Example AI prompt:

Review this Java service for transaction and data consistency issues. Focus on:

- missing @Transactional

- wrong transaction boundaries

- partial updates

- rollback risks

- multiple dependent writes

- external calls inside transactions

- concurrency problems

- inconsistent entity state after failure

This is where AI often needs guidance.

If you simply ask “review this code,” the AI may only comment on naming or readability.

But if you explicitly ask about transactions and consistency, it is much more likely to find real risks.

For example, imagine a service method that:

- updates an order status

- saves a payment record

- sends a confirmation email

- updates inventory

If sending the confirmation email (the third step) fails, what should happen?

Should the order still be approved?

Should the payment be saved?

Should inventory be reduced?

Should the operation retry?

Should the email be sent asynchronously?

These are senior-level questions.

And they are exactly the kind of questions your AI review should help you ask.
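One common answer to the email question is to commit the database writes first and trigger side effects only after the commit succeeds. In Spring this is often done with `@TransactionalEventListener` (whose default phase is `AFTER_COMMIT`) or with an outbox table. The plain-Java sketch below imitates that idea with a hypothetical in-memory `UnitOfWork`: staged writes are applied together, and after-commit callbacks run only if every write succeeded. It is a teaching model, not a real transaction manager.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

// Hypothetical in-memory model of "all writes or none, then side effects".
final class UnitOfWork {
    private final Map<String, String> store; // stands in for the database
    private final List<Consumer<Map<String, String>>> writes = new ArrayList<>();
    private final List<Runnable> afterCommitActions = new ArrayList<>();

    UnitOfWork(Map<String, String> store) {
        this.store = store;
    }

    void write(Consumer<Map<String, String>> change) {
        writes.add(change);
    }

    void afterCommit(Runnable sideEffect) {
        afterCommitActions.add(sideEffect);
    }

    void commit() {
        Map<String, String> staged = new HashMap<>(store); // work on a copy
        for (Consumer<Map<String, String>> change : writes) {
            change.accept(staged); // if this throws, `store` is left untouched
        }
        store.clear();
        store.putAll(staged); // publish all writes together
        afterCommitActions.forEach(Runnable::run); // side effects only after a successful commit
    }
}
```

The trade-off is still there: if the email fails after the commit, the order stays approved, so the review should also ask how that failure is retried or recorded.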

4. Security and Data Exposure

Security bugs are not always obvious.

A pull request can look clean but still introduce a serious vulnerability.

Ask:

- Does the code check authorization?

- Can a user access another user’s data?

- Are sensitive fields exposed in the response?

- Are secrets, tokens, emails, phone numbers, or personal data logged?

- Are inputs validated?

- Are file uploads restricted?

- Are permissions checked at the right layer?

- Can the user manipulate an ID in the request?

- Is there a risk of injection?

- Are error messages exposing internal details?

Example AI prompt:

Review this Java Spring Boot code for security and data exposure risks. Focus on:

- missing authorization checks

- insecure direct object access

- sensitive data in logs

- sensitive data in API responses

- weak input validation

- injection risks

- unsafe error messages

- permission bypass scenarios

Prioritize issues that could impact production users.

For backend Java developers, one of the most common risks is authorization logic.

For example:

GET /api/orders/{orderId}

The code may correctly find the order by ID.

But does it verify that the authenticated user owns that order?

If not, this can become a serious access control bug.
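A minimal sketch of the missing check, in plain Java with a hypothetical `Order` record and an in-memory map standing in for the repository: the lookup is scoped to the authenticated user, and an order owned by someone else is reported exactly like a missing one, so the endpoint does not reveal which IDs exist. In a Spring Boot app the user ID would typically come from the security context; here it is just a parameter.

```java
import java.util.Map;
import java.util.NoSuchElementException;
import java.util.Optional;

final class OrderQueries {
    // Hypothetical order type; ownerId identifies the user who placed the order.
    record Order(long id, long ownerId, String status) {}

    private final Map<Long, Order> ordersById;

    OrderQueries(Map<Long, Order> ordersById) {
        this.ordersById = ordersById;
    }

    // Vulnerable shape: finds the order by ID only, whoever is asking.
    Order findByIdUnsafe(long orderId) {
        return Optional.ofNullable(ordersById.get(orderId))
                .orElseThrow(() -> new NoSuchElementException("Order not found: " + orderId));
    }

    // Safer shape: the lookup is restricted to orders owned by the current user.
    Order findForUser(long orderId, long currentUserId) {
        return Optional.ofNullable(ordersById.get(orderId))
                .filter(order -> order.ownerId() == currentUserId)
                // Same error for "missing" and "not yours", so IDs cannot be probed.
                .orElseThrow(() -> new NoSuchElementException("Order not found: " + orderId));
    }
}
```

Whether to answer 404 or 403 for someone else's order is a design choice; the important part for the review is that ownership is checked at all.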

AI can help detect these risks, but only if you ask it to review the code from a security perspective.

Never rely on a generic AI review for security-sensitive code.

Use a dedicated security checklist.

5. Error Handling and Observability

A production bug is much harder to fix when the system gives you no useful signal.

That is why error handling and observability are part of a serious PR review.

Ask:

- Are exceptions swallowed silently?

- Are exceptions logged with enough context?

- Are logs too noisy?

- Do logs expose sensitive data?

- Are error messages useful for debugging?

- Are user-facing errors clear but safe?

- Is the right exception type used?

- Are retries needed?

- Are failures visible in monitoring?

- Can support or operations teams understand what happened?

Example AI prompt:

Review this Java code for error handling and observability issues. Focus on:

- swallowed exceptions

- missing logs

- noisy logs

- sensitive data in logs

- unclear error messages

- wrong exception handling

- missing failure context

- production debugging risks

Bad error handling often looks harmless during review.

For example:

```java
try {
    paymentService.charge(order);
} catch (Exception e) {
    log.warn("Payment failed");
}
```

This might look acceptable at first glance.

But what is missing?

- Which order failed?

- Which user was affected?

- Was the payment retried?

- Was the order status updated?

- Should the exception stop the workflow?

- Is the warning enough?

- Is support able to trace the issue?

A senior reviewer does not only check whether the exception is caught.

They check whether the failure behavior is correct.
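Here is one possible rewrite of the snippet above, assuming a hypothetical `PaymentException` thrown by the payment client and a hypothetical `PAYMENT_FAILED` status. It uses `java.util.logging` so it runs without extra dependencies; with SLF4J the equivalent is passing the exception as the last argument to `log.warn(...)`. The policy shown (record context, mark the order, rethrow so the workflow stops) is one reasonable choice, not the only correct one.

```java
import java.util.logging.Level;
import java.util.logging.Logger;

final class PaymentStep {
    private static final Logger LOG = Logger.getLogger(PaymentStep.class.getName());

    // Hypothetical types, for illustration only.
    static final class PaymentException extends RuntimeException {
        PaymentException(String message) {
            super(message);
        }
    }

    enum Status { PENDING, PAID, PAYMENT_FAILED }

    static final class Order {
        final long id;
        final long userId;
        Status status = Status.PENDING;

        Order(long id, long userId) {
            this.id = id;
            this.userId = userId;
        }
    }

    interface PaymentService {
        void charge(Order order);
    }

    static void charge(Order order, PaymentService paymentService) {
        try {
            paymentService.charge(order);
            order.status = Status.PAID;
        } catch (PaymentException e) {
            // Catch the specific failure rather than Exception, so programming errors are not hidden.
            order.status = Status.PAYMENT_FAILED;
            // Log identifiers (not card data or other sensitive fields) together with the exception.
            LOG.log(Level.WARNING,
                    String.format("Payment failed for orderId=%d userId=%d", order.id, order.userId), e);
            // Rethrow so callers do not continue as if the payment succeeded.
            throw e;
        }
    }
}
```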

6. Performance and Database Access

Performance problems often enter production through normal-looking pull requests.

The code works during local testing.

It works with five records.

But it fails with 500,000 records.

Ask:

- Is there a database call inside a loop?

- Is there a possible N+1 query problem?

- Is pagination needed?

- Can this collection become large?

- Is the code loading too much data into memory?

- Is the query indexed?

- Is sorting done in memory instead of the database?

- Are external API calls made inside a loop?

- Is caching needed?

- Is the method called frequently?

Example AI prompt:

Review this Java code for performance and database access risks. Focus on:

- N+1 queries

- database calls inside loops

- missing pagination

- large collections

- unnecessary memory usage

- inefficient repository calls

- repeated external API calls

- scalability risks in production

This is particularly important for Java applications using:

- Spring Data JPA

- Hibernate

- REST clients

- batch jobs

- scheduled jobs

- reporting queries

- large datasets

- microservice calls

A pull request can be correct functionally but still dangerous operationally.

Example:

```java
for (Customer customer : customers) {
    List<Order> orders = orderRepository.findByCustomerId(customer.getId());
    // process orders
}
```

This may work in development.

But in production, it issues one query per customer, which can add up to hundreds or thousands of queries.

AI can help spot this pattern, but again, only if you ask it to look for performance and data access risks.
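A common fix is to load the orders for all customers in one query and group them in memory. The sketch below uses a hypothetical in-memory repository that counts its "queries" so the difference is visible. With Spring Data JPA, a derived query method such as `findByCustomerIdIn(Collection<Long> customerIds)` would typically produce a single `IN (...)` query, although very large ID lists may still need to be split into chunks.

```java
import java.util.Collection;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

final class OrderLoading {
    record Order(long id, long customerId) {}

    // Hypothetical repository that counts how many "queries" it receives.
    static final class CountingOrderRepository {
        private final List<Order> table;
        int queryCount;

        CountingOrderRepository(List<Order> table) {
            this.table = table;
        }

        List<Order> findByCustomerId(long customerId) {
            queryCount++;
            return table.stream().filter(o -> o.customerId() == customerId).collect(Collectors.toList());
        }

        List<Order> findByCustomerIdIn(Collection<Long> customerIds) {
            queryCount++;
            return table.stream().filter(o -> customerIds.contains(o.customerId())).collect(Collectors.toList());
        }
    }

    // N+1 shape: one query per customer.
    static Map<Long, List<Order>> loadPerCustomer(List<Long> customerIds, CountingOrderRepository repo) {
        Map<Long, List<Order>> result = new HashMap<>();
        for (Long customerId : customerIds) {
            result.put(customerId, repo.findByCustomerId(customerId));
        }
        return result;
    }

    // Batched shape: one query, grouped in memory.
    // Customers without orders are absent from the map; read with getOrDefault(id, List.of()).
    static Map<Long, List<Order>> loadBatched(List<Long> customerIds, CountingOrderRepository repo) {
        return repo.findByCustomerIdIn(customerIds).stream()
                .collect(Collectors.groupingBy(Order::customerId));
    }
}
```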

7. Test Coverage That Actually Protects Production

Test coverage is not the same as production protection.

A pull request can include tests and still miss the dangerous cases.

Ask:

- Do the tests cover only the happy path?

- Is there a test for invalid input?

- Is there a test for duplicate actions?

- Is there a test for unauthorized access?

- Is there a test for transaction rollback?

- Is there a test for external service failure?

- Is there a test for empty results?

- Is there a test for boundary values?

- Is there a test for concurrency or repeated calls?

- Are the assertions meaningful?

Example AI prompt:

Review the tests in this Java PR. Focus on:

- missing edge case tests

- missing failure scenarios

- missing authorization tests

- weak assertions

- tests that only verify implementation details

- missing integration tests

- scenarios that could still break production

Suggest the most important tests to add.

A weak test checks that a method was called.

A stronger test checks that the business behavior is correct.

For example, this is weak:

```java
verify(orderRepository).save(order);
```

This is stronger:

```java
assertThat(order.getStatus()).isEqualTo(OrderStatus.APPROVED);
assertThat(order.getApprovedAt()).isNotNull();
```

But even that may not be enough.

You may also need to test:

- order already approved

- order cancelled

- payment failed

- user not authorized

- inventory unavailable

- duplicate request

- database rollback

A senior reviewer uses AI to find missing tests, not just to check existing tests.
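To make a few of these scenarios concrete, here is a framework-free sketch of behavior-focused checks for a hypothetical `Order.approve(...)` method. In a real project these would usually be JUnit 5 tests using `assertThrows` and AssertJ's `assertThat`; plain Java is used only so the example runs without dependencies, and `expectThrows` is a small hypothetical stand-in. Each check asserts on observable state or on a rejected transition, not on which repository method was called.

```java
import java.time.Instant;

final class ApprovalChecks {
    enum Status { PENDING, APPROVED, CANCELLED }

    // Hypothetical domain object; approve() enforces the business rule itself.
    static final class Order {
        Status status;
        Instant approvedAt;

        Order(Status status) {
            this.status = status;
        }

        void approve(Instant now) {
            if (status != Status.PENDING) {
                throw new IllegalStateException("Cannot approve order in status " + status);
            }
            status = Status.APPROVED;
            approvedAt = now;
        }
    }

    // Minimal stand-in for JUnit's assertThrows.
    static void expectThrows(Class<? extends RuntimeException> type, Runnable action) {
        try {
            action.run();
        } catch (RuntimeException e) {
            if (type.isInstance(e)) {
                return;
            }
            throw new AssertionError("Expected " + type.getSimpleName() + " but got " + e, e);
        }
        throw new AssertionError("Expected " + type.getSimpleName() + " but nothing was thrown");
    }

    static void approve_setsStatusAndTimestamp() {
        Instant now = Instant.parse("2025-01-01T10:00:00Z");
        Order order = new Order(Status.PENDING);
        order.approve(now);
        if (order.status != Status.APPROVED || !now.equals(order.approvedAt)) {
            throw new AssertionError("pending order should become APPROVED with approvedAt set");
        }
    }

    static void approve_rejectsAlreadyApprovedOrder() {
        Order order = new Order(Status.APPROVED);
        expectThrows(IllegalStateException.class, () -> order.approve(Instant.now()));
    }

    static void approve_rejectsCancelledOrder() {
        Order order = new Order(Status.CANCELLED);
        expectThrows(IllegalStateException.class, () -> order.approve(Instant.now()));
        if (order.status != Status.CANCELLED) {
            throw new AssertionError("cancelled order must stay CANCELLED");
        }
    }

    public static void main(String[] args) {
        approve_setsStatusAndTimestamp();
        approve_rejectsAlreadyApprovedOrder();
        approve_rejectsCancelledOrder();
        System.out.println("all approval checks passed");
    }
}
```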

8. Maintainability and Architecture

Finally, review the long-term impact.

A pull request should not only work today.

It should still be understandable and maintainable six months from now.

Ask:

- Is the controller doing business logic?

- Is the service doing too many things?

- Is the method too long?

- Is the class becoming a “God class”?

- Is the code introducing unnecessary coupling?

- Is the domain logic hidden in mapping code?

- Is the same rule duplicated in multiple places?

- Is the abstraction useful or over-engineered?

- Does the change fit the existing architecture?

- Will the next developer understand this code quickly?

Example AI prompt:

Review this Java PR for maintainability and architecture risks. Focus on:

- business logic in controllers

- oversized services

- duplicated rules

- unnecessary coupling

- unclear responsibilities

- over-engineering

- hidden complexity

- code that will be hard to change later

This part is important because AI often suggests refactoring.

But not every refactoring is useful.

A senior reviewer should evaluate whether the change makes the system simpler or more complex.

The goal is not to make the code look clever.

The goal is to make the system easier to understand, test, and safely change.
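As a small illustration of "business logic in controllers" and "duplicated rules", the sketch below keeps a hypothetical discount rule (at most one discount, and only above a minimum total) inside one domain method, while two hypothetical entry points, one standing in for a REST controller and one for a batch job, only delegate. The amounts are made up; the point is where the rule lives.

```java
import java.math.BigDecimal;

final class DiscountRules {
    // Hypothetical policy values, defined once.
    static final BigDecimal DISCOUNT = new BigDecimal("10.00");
    static final BigDecimal MINIMUM_TOTAL = new BigDecimal("50.00");

    static final class Cart {
        private BigDecimal total;
        private boolean discountApplied;

        Cart(BigDecimal total) {
            this.total = total;
        }

        BigDecimal total() {
            return total;
        }

        // The whole rule lives here: at most one discount, and only at or above the minimum total.
        boolean applyDiscount() {
            if (discountApplied || total.compareTo(MINIMUM_TOTAL) < 0) {
                return false; // rule not satisfied: cart unchanged
            }
            total = total.subtract(DISCOUNT);
            discountApplied = true;
            return true;
        }
    }

    // Thin entry points: they delegate instead of re-implementing the rule.
    static boolean handleApplyDiscountRequest(Cart cart) { // e.g. called by a REST controller
        return cart.applyDiscount();
    }

    static boolean runCampaignJob(Cart cart) { // e.g. called by a scheduled batch job
        return cart.applyDiscount();
    }
}
```

If a PR adds a third caller that checks `discountApplied` itself, that duplicated rule is worth a review comment.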

Example: A Java PR That Looks Fine but Contains Real Bugs

Let’s look at a simplified example.

Imagine a pull request adds this method:

```java
public void approveOrder(Long orderId) {
    Order order = orderRepository.findById(orderId)
            .orElseThrow(() -> new OrderNotFoundException(orderId));

    order.setStatus(OrderStatus.APPROVED);
    order.setApprovedAt(LocalDateTime.now());
    orderRepository.save(order);

    notificationService.sendOrderApprovedEmail(order);
}
```

At first glance, this code looks fine.

It loads an order, updates the status, saves it, and sends an email.

A basic AI review might say:

The code is clear and readable. Consider adding logging and unit tests.

That is not wrong.

But it is not enough.

A senior AI-assisted review should catch deeper issues.

What Could Break in Production?

1. The order may already be approved

The method does not check the current status.

If the same order is approved twice, the system may send duplicate emails or trigger duplicate downstream actions.

2. The order may be cancelled

The method does not prevent approving an order that should no longer be valid.

3. There is no visible transaction boundary

If the save succeeds but the notification fails, the system may end up with an approved order but no notification.

That may or may not be acceptable.

But the behavior should be intentional.

4. Authorization is not checked

Can any authenticated user approve any order?

Should only an admin or internal process be allowed to approve it?

5. There is no test for invalid state transitions

The happy path is not enough.

The PR should include tests for already approved, cancelled, missing, and unauthorized scenarios.

6. The notification may create unwanted side effects

If the method is retried, will the email be sent again?

Should notification be asynchronous?

Should it be idempotent?

This is the kind of review that catches real bugs.
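Here is one way the reviewed method could address issues 1, 2, 4, and 6, written in plain Java so it runs without Spring. `Actor`, its `canApproveOrders` flag, and the small repository and notification interfaces are hypothetical stand-ins. In a Spring Boot service the method would likely also be `@Transactional`, with the email published after commit (for example through `@TransactionalEventListener`) instead of sent inline; those choices depend on the business rules, which is exactly what the review should settle.

```java
import java.time.Clock;
import java.time.LocalDateTime;
import java.util.Optional;

final class OrderApproval {
    enum OrderStatus { PENDING, APPROVED, CANCELLED }

    static final class Order {
        final long id;
        OrderStatus status;
        LocalDateTime approvedAt;

        Order(long id, OrderStatus status) {
            this.id = id;
            this.status = status;
        }
    }

    // Hypothetical caller identity; in Spring this would come from the security context.
    record Actor(long userId, boolean canApproveOrders) {}

    interface OrderRepository {
        Optional<Order> findById(long id);
        void save(Order order);
    }

    interface NotificationService {
        void sendOrderApprovedEmail(Order order);
    }

    static final class OrderNotFoundException extends RuntimeException {
        OrderNotFoundException(long orderId) {
            super("Order not found: " + orderId);
        }
    }

    private final OrderRepository orderRepository;
    private final NotificationService notificationService;
    private final Clock clock; // injected so tests can control approvedAt

    OrderApproval(OrderRepository orderRepository, NotificationService notificationService, Clock clock) {
        this.orderRepository = orderRepository;
        this.notificationService = notificationService;
        this.clock = clock;
    }

    void approveOrder(long orderId, Actor actor) {
        // Issue 4: authorization is checked before any state is touched.
        if (!actor.canApproveOrders()) {
            throw new SecurityException("User " + actor.userId() + " may not approve orders");
        }
        Order order = orderRepository.findById(orderId)
                .orElseThrow(() -> new OrderNotFoundException(orderId));
        // Issues 1 and 2: only PENDING orders can be approved, so a retry or a cancelled
        // order is rejected instead of triggering a second approval.
        if (order.status != OrderStatus.PENDING) {
            throw new IllegalStateException("Order " + orderId + " cannot be approved from " + order.status);
        }
        order.status = OrderStatus.APPROVED;
        order.approvedAt = LocalDateTime.now(clock);
        orderRepository.save(order);
        // Issue 6: the email is sent only when this call actually changed the state.
        notificationService.sendOrderApprovedEmail(order);
    }
}
```

The status check alone does not stop two concurrent requests from both reading `PENDING`; optimistic locking (for example a JPA `@Version` field) or a conditional update is the usual complement.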

A Better AI Review Prompt for This PR

Instead of asking:

Review this code.

Ask:

Review this Java Spring Boot PR as a senior backend engineer. Focus only on production risks:

- business logic bugs

- invalid state transitions

- missing authorization checks

- transaction and rollback issues

- duplicate actions

- notification side effects

- missing tests

- data consistency problems

For each issue, provide:

1. the risk

2. why it matters

3. the suggested fix

4. the test that should be added

Do not focus on formatting unless it hides a real bug.

This prompt changes the review quality.

You are not asking AI to “look at the code.”

You are asking AI to think through production failure modes.

That is the real value.

A Better Structure for AI Code Review Output

When using AI for pull request reviews, ask for structured output.

For example:

Return the review as a table with these columns:

- Risk Area

- Issue Found

- Why It Matters

- Suggested Fix

- Test to Add

- Severity

This makes the result easier to use in a real review.

Example output format:

| Risk Area | Issue Found | Why It Matters | Suggested Fix | Test to Add | Severity |
|---|---|---|---|---|---|
| Business Logic | No check for already approved orders | Can trigger duplicate approval behavior | Validate current status before approving | approveOrder_shouldFail_whenOrderAlreadyApproved | High |
| Security | No authorization check | Unauthorized users may approve orders | Check user role or ownership | approveOrder_shouldFail_whenUserUnauthorized | High |
| Data Consistency | Save happens before notification | Partial success may create inconsistent workflow | Decide whether notification failure should rollback or be async | approveOrder_shouldHandleNotificationFailure | Medium |
| Tests | Only happy path covered | Edge cases can break production | Add state transition tests | cancelledOrder_shouldNotBeApproved | High |

This structure helps you turn AI feedback into real engineering action.

What AI Should Not Decide Alone

AI can help you review a pull request faster.

But it should not make the final decision alone.

A human reviewer should still decide:

- whether the business behavior is correct

- whether the architecture is acceptable

- whether the risk is worth taking

- whether the tests are sufficient

- whether the security model is respected

- whether the code should be merged now or reworked

- whether the system impact is fully understood

AI is useful for acceleration.

But accountability still belongs to the engineering team.

This is especially true for Java backend systems that handle:

- payments

- customer data

- internal business workflows

- access control

- financial records

- production integrations

- regulated data

- critical batch jobs

For this kind of code, AI should be part of the review process, not the owner of the decision.

How to Add AI Code Review to Your PR Process

Here is a practical workflow you can use.

Step 1: Ask AI for a PR Summary

Start with a simple summary.

Summarize this pull request. Explain:

- what changed

- which files are most important

- what business behavior may be affected

- which areas need careful review

This helps you understand the PR faster.

But do not stop there.

A summary is not a review.

Step 2: Run the Senior Checklist

Next, run a structured review.

Review this PR using this checklist:

1. Business logic and edge cases

2. API contract and backward compatibility

3. Transactions and data consistency

4. Security and data exposure

5. Error handling and observability

6. Performance and database access

7. Test coverage

8. Maintainability and architecture

Return only issues that could create real production risk.

This is where AI becomes more valuable.

Step 3: Ask for Missing Tests

After reviewing the code, ask AI specifically about tests.

Based on this PR, what tests are missing? Focus on:

- edge cases

- invalid states

- failure scenarios

- authorization

- transaction rollback

- duplicate requests

- external service failures

Give me concrete test method names and what each test should verify.

This is one of the best ways to use AI in code review.

It helps you move from “the code looks fine” to “the risk is covered.”

Step 4: Review the AI Review

Do not blindly accept the AI output.

Review it like you would review a junior developer’s comments.

Ask:

- Is this issue real?

- Is the suggested fix safe?

- Is the recommendation over-engineered?

- Did AI miss the real risk?

- Did AI misunderstand the business rule?

- Did AI hallucinate a problem that does not exist?

AI review is input.

Not final judgment.

Step 5: Leave Human-Friendly PR Comments

Finally, turn the useful findings into clear PR comments.

A good PR comment should be specific and actionable.

Instead of writing:

This looks risky.

Write:

This method approves the order without checking the current status.

Could we add a guard to prevent approving an already approved or cancelled order?

I’d also add tests for APPROVED and CANCELLED states.

This is much more useful.

It explains:

- what the issue is

- why it matters

- what to change

- what test to add

That is the kind of review that improves code quality without creating unnecessary friction.

Recommended AI Code Review Prompt for Java

Here is a reusable prompt you can adapt for your own pull requests:

Act as a senior Java backend engineer reviewing this pull request. Your goal is to find real production risks, not style preferences.

Review the code for:

1. Business logic bugs

2. Edge cases

3. Invalid state transitions

4. API contract or backward compatibility issues

5. Transaction and rollback problems

6. Data consistency risks

7. Security and authorization issues

8. Sensitive data exposure

9. Error handling problems

10. Observability gaps

11. Performance and database access issues

12. Missing or weak tests

13. Maintainability and architecture risks

For each issue, provide:

- Risk area

- Issue found

- Why it matters

- Suggested fix

- Test to add

- Severity: Low, Medium, High, Critical

Ignore formatting comments unless they hide a real bug.

Prioritize issues that could break production.

You can use this prompt with:

- GitHub Copilot Chat

- ChatGPT

- Claude

- Gemini

- Cursor

- JetBrains AI Assistant

- any AI coding assistant that can analyze code context

The tool matters less than the review structure.

Common Mistakes When Using AI for Code Review

AI code review can be helpful, but many developers use it too vaguely.

Here are common mistakes to avoid.

Mistake 1: Asking “Review This Code”

This prompt is too broad.

It usually produces generic feedback.

Better:

Review this Java code for production risks: business logic, transactions, security, performance, and missing tests.

Mistake 2: Accepting AI Feedback Without Validation

AI can be wrong.

It can misunderstand the business context.

It can suggest unnecessary abstractions.

It can miss a critical edge case.

Treat AI feedback as a starting point, not a final answer.

Mistake 3: Focusing Only on Clean Code

Clean code is important.

But a clean bug is still a bug.

A well-named method can still approve the wrong order.

A readable service can still miss a transaction.

A beautiful refactor can still break an API contract.

Use AI to review correctness before style.

Mistake 4: Ignoring Tests

One of the best uses of AI is test discovery.

Ask it:

What tests are missing from this PR?

Then ask:

Which missing test would catch the most expensive production bug?

This forces the review to focus on risk.

Mistake 5: Not Giving AI Enough Context

AI performs better when it has context.

For serious reviews, include:

- the PR diff

- related service methods

- DTOs

- controller endpoints

- entity relationships

- test files

- business rules

- expected behavior

- known constraints

A review without context is shallow.

A review with context can be much more useful.

When AI Code Review Works Best

AI-assisted code review works best when:

- the PR is not too large

- the reviewer gives clear instructions

- the checklist is specific

- the business context is included

- the developer validates the output

- AI is used to find risks, not just style issues

- tests are reviewed carefully

- humans keep final responsibility

If your team wants better reviews, do not just add AI.

Add a better review workflow.

Download the Java AI PR Review Pack (2026)

If you want a ready-to-use version of this workflow, I created the Java AI PR Review Pack (2026).

It is a practical 2-page checklist designed to help Java developers review pull requests with AI without missing real production risks.

Use it to check:

- business logic

- edge cases

- transactions

- security

- performance

- tests

- maintainability

- AI prompts for PR review

Download it here:

https://prodevaihub.com/java-ai-pr-review-pack/

More Resources for Java Developers Using AI

If you want to go deeper into practical AI workflows for developers, here are more resources.

Java AI PR Review Pack

A practical checklist to review Java pull requests with AI and catch real backend risks.

https://prodevaihub.com/java-ai-pr-review-pack/

Freelance Tech & AI Guide

If you want to use AI to save time, build better workflows, and create more opportunities as a tech professional, check out the guide here:

ProDevAIHub Newsletter

Get practical AI workflows for Java, backend development, and real developer productivity.

https://prodevaihub.com/newsletter

ProDevAIHub YouTube Channel

For video tutorials, backend AI workflows, and practical examples for Java developers, subscribe here:

https://www.youtube.com/@ProDevAIHub

Conclusion: AI Code Review Is a Workflow, Not a Magic Button

AI can help Java developers review code faster.

But speed is not the main goal.

The real goal is to catch the bugs that matter.

The bugs that break production are often hidden in business logic, transactions, authorization, data consistency, performance, and missing tests.

That is why AI code review needs a senior checklist.

Do not ask AI whether the code “looks good.”

Ask it what can break.

Ask it what edge cases are missing.

Ask it what tests should be added.

Ask it where the production risk is.

That is how AI becomes useful in real Java pull request reviews.

Not as a replacement for senior engineers.

But as a tool that helps senior engineers review with more structure, more speed, and more focus.

Download the Java AI PR Review Pack (2026) and use it in your next pull request review:

https://prodevaihub.com/java-ai-pr-review-pack/

FAQ

Can AI review Java code effectively?

Yes, AI can help review Java code effectively when it is guided with a clear checklist. It can identify common issues, summarize changes, suggest tests, and highlight possible risks. However, it should not replace human review, especially for business logic, security, transactions, and architecture decisions.

What should a senior Java developer check in a pull request?

A senior Java developer should check business logic, edge cases, API compatibility, transactions, data consistency, security, error handling, performance, tests, maintainability, and architectural impact.

Can GitHub Copilot review pull requests?

Yes. GitHub provides Copilot code review features that can assist with pull request reviews and code review workflows. You can learn more in the official GitHub Copilot documentation.

What are the biggest risks of AI-generated Java code?

The biggest risks are incorrect business logic, missing edge cases, weak tests, security issues, transaction problems, performance issues, and code that looks correct but does not behave correctly in production.

Should AI replace human code reviewers?

No. AI should assist human reviewers, not replace them. Human developers are still responsible for understanding business rules, validating architecture, checking security impact, and making the final merge decision.

How do I make AI code review more useful?

Use specific prompts. Instead of asking “review this code,” ask AI to review the PR for production risks, business logic bugs, transaction issues, security problems, performance risks, and missing tests.

Sources and Further Reading

- Stack Overflow Developer Survey 2025, AI usage and sentiment: https://survey.stackoverflow.co/2025/

- Stack Overflow Developer Survey 2025, AI section: https://survey.stackoverflow.co/2025/ai

- GitHub Docs, GitHub Copilot code review features: https://docs.github.com/en/enterprise-cloud@latest/copilot/get-started

- GitHub Docs, Pull request reviews: https://docs.github.com/en/enterprise-server@3.20/pull-requests/collaborating-with-pull-requests/proposing-changes-to-your-work-with-pull-requests/requesting-a-pull-request-review